Misinformation plays a major role in shaping public opinion, which makes media literacy tools essential. Two tools created to teach people about misinformation are the News Literacy Project's RumorGuard and the interactive game Bad News. Both aim to help people recognize misinformation, but one does so through analysis of real-world examples, while the other uses game simulation.

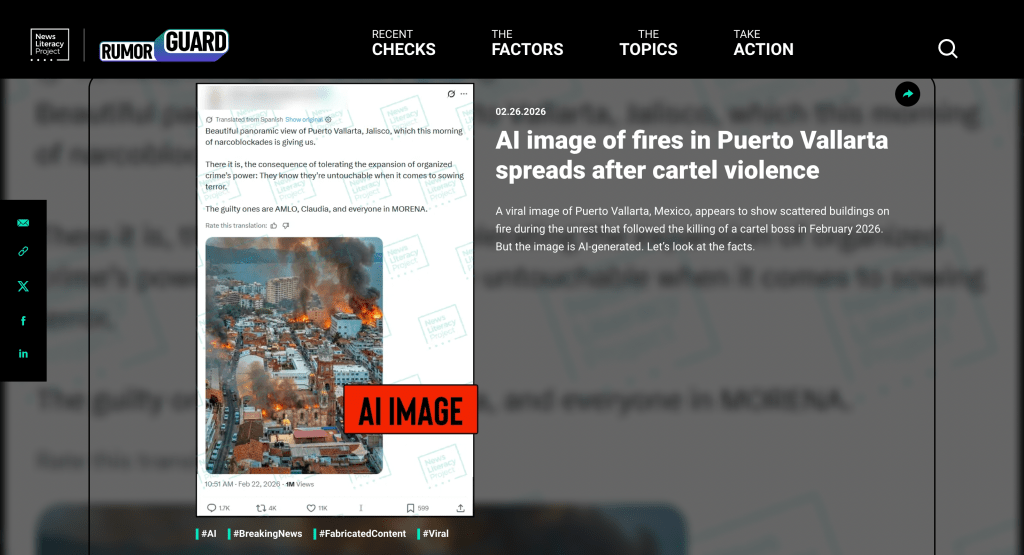

The first tool is RumorGuard, an online platform that analyzes viral claims and determines whether they are true, false, or misleading. Key factors it uses to break down each story include evidence, context, and authenticity. One post that caught my attention was an AI-generated image that claimed to show fires in Puerto Vallarta after cartel violence. At first the image looked completely real to me, but RumorGuard carefully explained that despite its realistic appearance, it had been fabricated with AI and passed off as real news.

This example shows how RumorGuard teaches users to question the visual content they see, much like we practiced in this past week's module. It helps users understand that just because something looks real does not mean it is accurate. The tool is effective because it takes the time to walk users through the verification steps, showing why a claim is misleading, or could be misleading, when they are unsure. I find it beneficial because I had never known of a website like this before, and now when I see something online that I suspect is misinformation, I can go there to check. However, I can see how it might be considered passive, since users are simply reading explanations rather than interacting with the content.

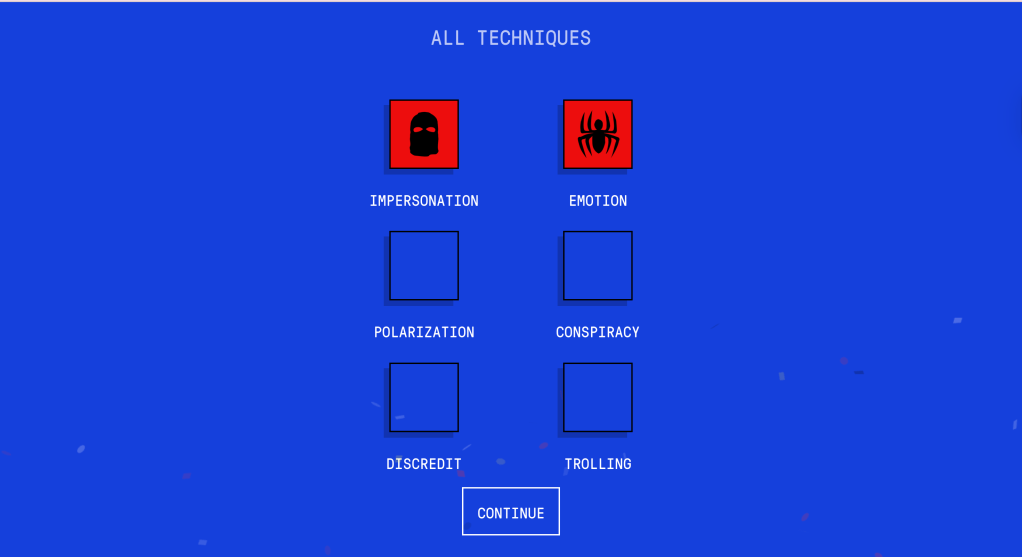

The next tool is Bad News, which takes a more interactive approach. In the game, players act as creators of misinformation and try to build influence and gain followers by spreading fake or misleading content, using tactics such as emotional appeals, impersonation, and polarization.

Bad News teaches participants how easy it is to create misinformation that people will genuinely believe and interact with. By putting users in control, it shows how strategies that play on fear, emotion, and bias are used to capture people's attention. The game felt especially important to me because half of the examples it gave sounded similar to so many tweets I have seen online. Players experience firsthand how simple it is to fool an audience, and I will use what I learned to analyze the tactics people use to spread fake news and misinformation.

Research has shown that false information can spread faster than true information online. One major study found that false news travels faster and farther, and is more likely to be shared, than accurate news. People engage with content that appeals to their personal feelings, so when creators make fake content that caters to the emotions behind what people already believe, it spreads faster.

People are drawn to content that feels emotionally impactful and real. Psychological research also shows that once people form beliefs, they can be difficult to change because the brain prefers information that confirms existing views and feels familiar.

Educational games and tools are powerful ways to teach about misinformation. They make learning more engaging and help users remember what they learn. However, for confirming whether something you see online is actually misinformation, structured tools like RumorGuard reinforce critical thinking and walk you through the steps of catching fake news. Used together, these tools help people become more informed and better prepared to navigate today's complex digital media environment and tell what is fake from what is true.
